Analyzing Replica Inconsistency in ClickHouse

Environment

```text
clickhouse version: 24.10.x
```

Problem Description

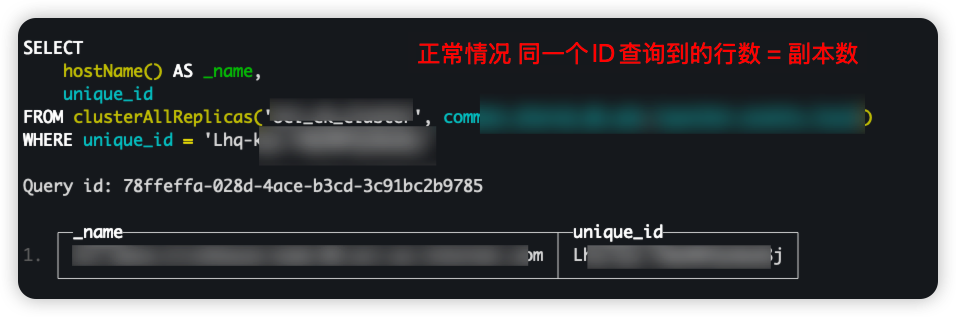

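When running SQL aggregations over historical data, repeated runs of the same query returned different results, i.e. the query was not idempotent.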

```sql
-- Locate the problem
```

Investigation

The two replicas of shard 2 held different data, which points to a replication problem between the replicas of that shard.

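One replica had the data and the other did not. A likely cause is that one replica node failed at the step of fetching the part entry (ClickHouse replication only guarantees eventual consistency).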

```text
data write --> part entry
```

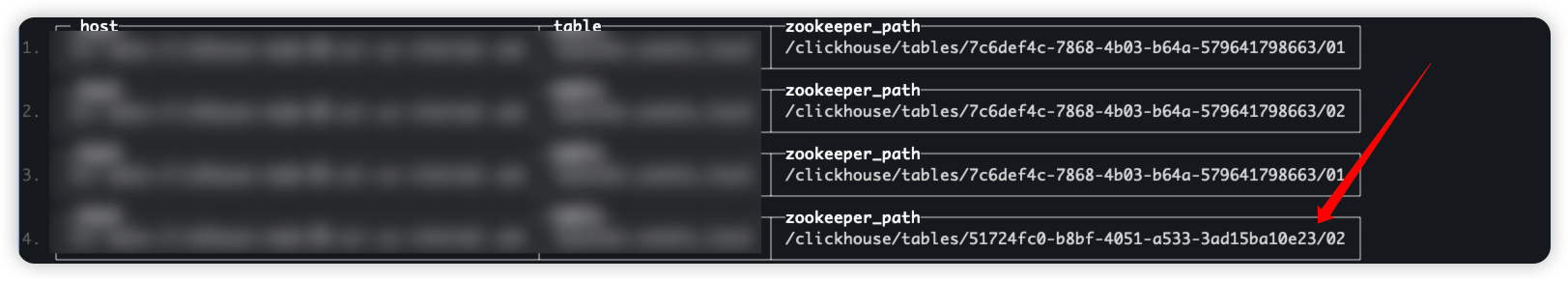
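To make the failure mode concrete, here is a toy Python model, assuming a heavily simplified view of replication: each ZooKeeper table path holds one log of announced parts, and a replica only fetches parts announced under its own path. The classes, replica names, and the part name `all_1_1_0` are illustrative; real ClickHouse keeps a shared log plus a per-replica queue, which this sketch does not reproduce. The two paths are the ones found later in this post.

```python
from collections import defaultdict


class ToyZooKeeper:
    """Toy stand-in for ZooKeeper: one append-only log of part names per table path."""

    def __init__(self):
        self.logs = defaultdict(list)  # zookeeper_path -> [part names]

    def publish(self, zk_path, part):
        self.logs[zk_path].append(part)

    def read(self, zk_path):
        return list(self.logs[zk_path])


class ToyReplica:
    def __init__(self, name, zk_path, zk):
        self.name = name
        self.zk_path = zk_path
        self.zk = zk
        self.parts = set()

    def insert(self, part):
        # A write creates the part locally and announces it under this replica's path.
        self.parts.add(part)
        self.zk.publish(self.zk_path, part)

    def sync(self):
        # A replica only fetches parts announced under its *own* path.
        self.parts.update(self.zk.read(self.zk_path))


zk = ToyZooKeeper()
path_a = "/clickhouse/tables/51724fc0-b8bf-4051-a533-3ad15ba10e23/02"
path_b = "/clickhouse/tables/7c6def4c-7868-4b03-b64a-579641798663/02"

replica_1 = ToyReplica("replica-1", path_a, zk)  # hypothetical names
replica_2 = ToyReplica("replica-2", path_b, zk)  # registered under a different path
replica_3 = ToyReplica("replica-3", path_a, zk)  # same path as replica-1

replica_1.insert("all_1_1_0")
for r in (replica_1, replica_2, replica_3):
    r.sync()

print(sorted(replica_2.parts))  # [] : the part never reaches replica-2
print(sorted(replica_3.parts))  # ['all_1_1_0']
```

Under this model, eventual consistency only holds among replicas that share a path. A replica under a different path is not "lagging": it will never receive the data.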

Check the ZooKeeper path of the replicated table:

```sql
SELECT
```

The two replicas use different ZooKeeper paths:

/clickhouse/tables/51724fc0-b8bf-4051-a533-3ad15ba10e23/02

/clickhouse/tables/7c6def4c-7868-4b03-b64a-579641798663/02
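The query above is truncated, but ClickHouse exposes each replica's path in the `zookeeper_path` column of `system.replicas`, and it can be collected from every replica, for example with the `clusterAllReplicas` table function. Below is a minimal Python sketch of the comparison step. It assumes the rows have already been fetched for one table and that each row has been tagged with its shard number. The host names and the shard 1 path are hypothetical.

```python
from collections import defaultdict


def find_divergent_shards(rows):
    """rows: iterable of (shard, host, zookeeper_path) tuples for ONE table.

    Returns {shard: {zookeeper_path: [hosts]}} for every shard whose replicas
    are registered under more than one path. An empty dict means all replicas
    of each shard share a path.
    """
    by_shard = defaultdict(lambda: defaultdict(list))
    for shard, host, path in rows:
        by_shard[shard][path].append(host)
    return {
        shard: {path: sorted(hosts) for path, hosts in paths.items()}
        for shard, paths in by_shard.items()
        if len(paths) > 1
    }


rows = [
    # shard 1: hypothetical, both replicas agree
    (1, "host-a", "/clickhouse/tables/hypothetical-uuid/01"),
    (1, "host-b", "/clickhouse/tables/hypothetical-uuid/01"),
    # shard 2: the two paths observed in this post
    (2, "host-c", "/clickhouse/tables/51724fc0-b8bf-4051-a533-3ad15ba10e23/02"),
    (2, "host-d", "/clickhouse/tables/7c6def4c-7868-4b03-b64a-579641798663/02"),
]
print(find_divergent_shards(rows))
```

Only shard 2 is reported. Different paths *across* shards are expected because of `{shard}`, so the grouping is per shard.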

Solution

Option A: Fix the replica ZooKeeper path

Perform these steps only while all writes to the table are paused.

Preparation

```sql
-- Back up the data
```

Fix the replica ZooKeeper path

```shell
# on node-05
```

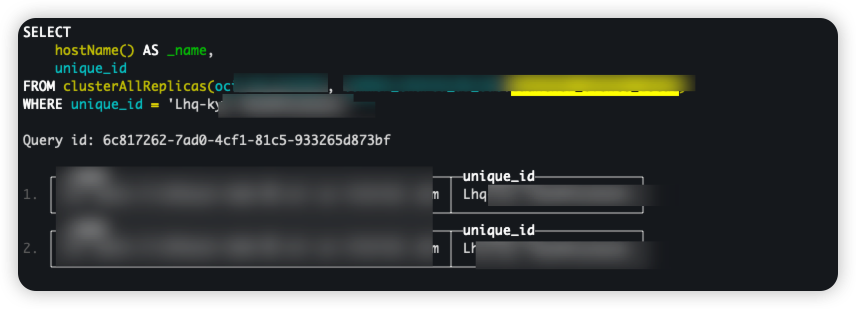

After the table is recreated, its data is synchronized automatically.

```sql
-- Verify the data synchronization status
```

Write the backup table back:

```sql
-- Run on node-05
```

- Check the data synchronization status

```sql
-- Check the sync status; an empty result means the data is in sync
```
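The sync-check query itself is truncated. One plausible criterion, and it is an assumption here rather than the post's exact query, is to flag replicas in `system.replicas` that are read-only, still have entries in the replication queue, or report a non-zero delay. The Python sketch below applies that filter to rows shaped like those columns (`is_readonly`, `queue_size`, `absolute_delay`). The host names and numbers are hypothetical.

```python
def unsynced_replicas(rows):
    """Return the replicas that are not yet caught up.

    rows: list of dicts using a subset of system.replicas column names
    (is_readonly, queue_size, absolute_delay). An empty list mirrors the
    "no rows means in sync" check described above.
    """
    return [
        r for r in rows
        if r["is_readonly"] or r["queue_size"] > 0 or r["absolute_delay"] > 0
    ]


lagging = [
    {"host": "host-c", "is_readonly": 0, "queue_size": 0, "absolute_delay": 0},
    {"host": "host-d", "is_readonly": 0, "queue_size": 12, "absolute_delay": 35},
]
caught_up = [dict(r, queue_size=0, absolute_delay=0) for r in lagging]

print([r["host"] for r in unsynced_replicas(lagging)])  # ['host-d']
print(unsynced_replicas(caught_up))  # []
```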

After the fix, verify that the original problem is gone:

```sql
select hostName() AS _name, `unique_id`
```
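This check also explains the non-idempotent results: a query through a Distributed table reads each shard from only one of its replicas, so the answer depends on which replica of shard 2 happens to serve the query. A minimal Python sketch of comparing the per-host `unique_id` sets returned by the verification query follows. The host names and id values are hypothetical.

```python
def missing_ids(ids_by_host):
    """ids_by_host: {host: iterable of unique_id values read on that replica}.

    Returns {host: sorted ids present on some other replica but not on this one}.
    An empty dict means every replica returned the same set of ids.
    """
    all_ids = set().union(*ids_by_host.values())
    gaps = {}
    for host, ids in ids_by_host.items():
        gap = all_ids - set(ids)
        if gap:
            gaps[host] = sorted(gap)
    return gaps


before_fix = {"host-c": ["u1", "u2", "u3"], "host-d": ["u1", "u2"]}
after_fix = {"host-c": ["u1", "u2", "u3"], "host-d": ["u1", "u2", "u3"]}

print(missing_ids(before_fix))  # {'host-d': ['u3']}
print(missing_ids(after_fix))   # {}
```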

Recreate the table

Pause writes to the table and back up its data first. Suitable for: tables with a small data volume (e.g. under 10 GB).

Root Cause

```sql
-- CREATE TABLE
```

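By default, a `ReplicatedMergeTree` declared without arguments is expanded to `ReplicatedMergeTree('/clickhouse/tables/{uuid}/{shard}', '{replica}')`.

Each DDL execution assigns a different UUID, so when the same CREATE statement is executed on several nodes, each node ends up with a completely different ZooKeeper path.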
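A toy simulation of that expansion, assuming Python's `str.format` as a stand-in for ClickHouse macro substitution and `uuid.uuid4()` as a stand-in for the UUID assigned when a table is created. `expand_macros` and `create_table_independently` are hypothetical helpers, not ClickHouse APIs.

```python
import uuid

# Default used when ReplicatedMergeTree is declared without arguments.
DEFAULT_ZK_PATH = "/clickhouse/tables/{uuid}/{shard}"


def expand_macros(template, macros):
    # Substitute {name} placeholders, similar in spirit to ClickHouse macros.
    return template.format(**macros)


def create_table_independently(shard):
    # Each standalone CREATE TABLE assigns the table a fresh UUID (simulated here).
    return expand_macros(DEFAULT_ZK_PATH, {"uuid": str(uuid.uuid4()), "shard": shard})


path_on_first_node = create_table_independently("02")
path_on_second_node = create_table_independently("02")

print(path_on_first_node)
print(path_on_second_node)
print(path_on_first_node == path_on_second_node)  # False
```

Both nodes share the same `{shard}` value, yet the paths differ only because of `{uuid}`, which matches the two paths observed on shard 2.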

- Build the ZooKeeper path from the database name and table name.

- Use `ENGINE = ReplicatedMergeTree('/clickhouse/tables/{shard}/{your_database_name}/{your_table_name}', '{replica}')`.

- Create the table with CREATE TABLE IF NOT EXISTS xxx rather than a plain CREATE TABLE.
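A small hypothetical Python helper that renders DDL following both recommendations. The database name, table name, and columns are placeholders (only `unique_id` appears in this post), and the helper is an illustration, not part of any ClickHouse tooling.

```python
def build_create_table(database, table, columns, order_by):
    """Render a CREATE TABLE IF NOT EXISTS statement whose ZooKeeper path is
    built from the database and table names instead of {uuid}.

    {shard} and {replica} are left as literal macros for each server to expand.
    """
    cols = ",\n    ".join(f"{name} {ctype}" for name, ctype in columns)
    zk_path = f"/clickhouse/tables/{{shard}}/{database}/{table}"
    return (
        f"CREATE TABLE IF NOT EXISTS {database}.{table}\n"
        f"(\n    {cols}\n)\n"
        f"ENGINE = ReplicatedMergeTree('{zk_path}', '{{replica}}')\n"
        f"ORDER BY {order_by}"
    )


ddl = build_create_table(
    "analytics",  # hypothetical database
    "events",     # hypothetical table
    [("unique_id", "String"), ("event_time", "DateTime")],
    "unique_id",
)
print(ddl)
```

Because the rendered statement is identical on every node and contains no `{uuid}`, all replicas of a shard expand to the same path from their own `{shard}` and `{replica}` macros. `IF NOT EXISTS` also makes re-running the statement on a node that already has the table harmless.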

References

- ClickHouse Data replication https://clickhouse.com/docs/academic_overview#3-6-data-replication

- Title: Analyzing Replica Inconsistency in ClickHouse

- Author: Ordiy

- Created at : 2025-07-10 15:40:38

- Updated at : 2026-03-23 15:48:10

- Link: https://ordiy.github.io/posts/2025-06-01-Analyzing-Replica-Inconsistency-in-ClickHouse/

- License: This work is licensed under CC BY-NC-SA 4.0.
